Text-to-image generators have proliferated since the release of DALL-E. Both Google and Meta quickly acknowledged that they had been working on comparable systems but said their models were not yet ready for the general public. Rival tools from start-ups soon went public, including Stable Diffusion and Midjourney, the latter of which produced the artwork that won an art competition at the Colorado State Fair in August and sparked debate.

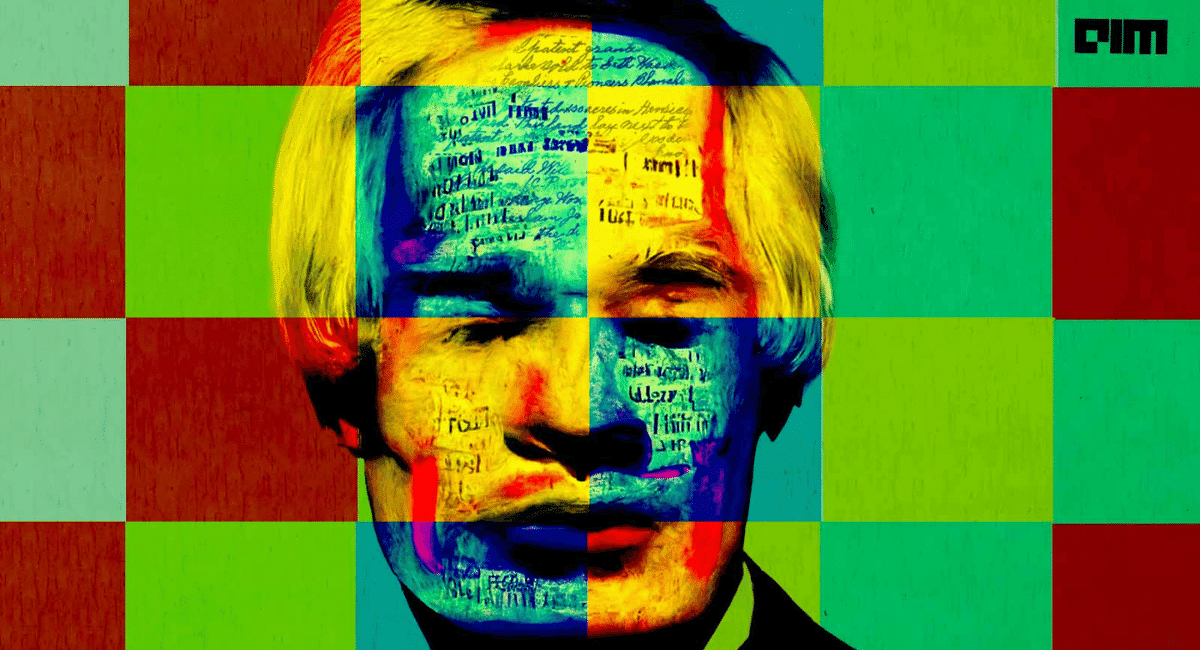

The technology is advancing quickly, outpacing the ability of AI companies to establish usage rules and prevent harmful outcomes. For example, researchers are concerned that the images created by these systems may reinforce racial and gender stereotypes or plagiarize artists whose work was used without their permission. In addition, fake pictures might be used to encourage bullying and harassment or to spread false information that appears to be true.

OpenAI, for its part, has tried to strike a balance between its desire to lead the way and promote its AI advancements and the need to avoid hastening these risks. For instance, OpenAI forbids images of politicians or celebrities to prevent DALL-E from being used to spread misinformation. Still, Sam Altman, the CEO of OpenAI, argues that the choice to make DALL-E available to the general public is a crucial step in developing the technology safely.
