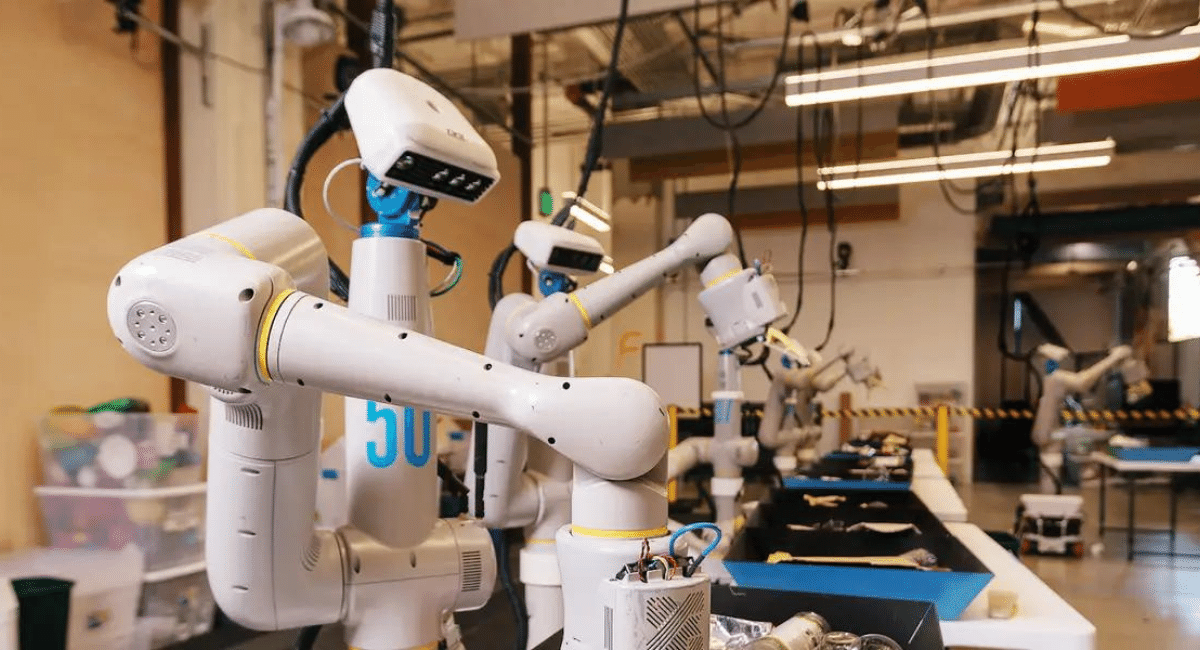

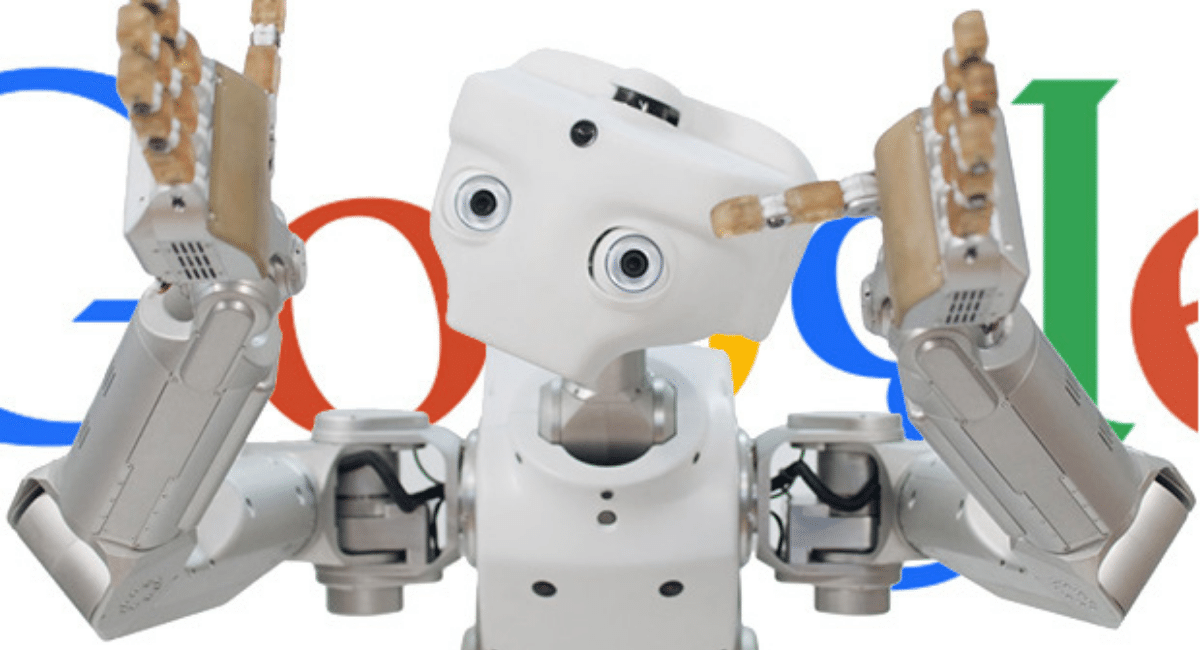

During the demonstration in Google's robotics lab in Mountain View, California, the most impressive thing was that no human coder had programmed the robot's response. Instead, millions of pages of text scraped from the web were used to teach the control software how to translate a spoken phrase into a sequence of physical actions.

Moreover, unlike with virtual assistants such as Alexa or Siri, a person doesn't need to use specific preapproved wording to issue commands. Tell the robot, "I'm thirsty," and it will try to get you something to drink; tell it, "Whoops, I just spilled my drink," and it will return with a sponge.

According to Karol Hausman, a senior research scientist at Google, robots need to be able to adapt and learn from their experiences in order to deal with the real world. In the demo, the robot also brought a sponge to clean up a spill. To interact with humans, machines must learn how words can be arranged in various ways to produce different meanings. Hausman said that the robot must understand all the subtleties and intricacies of language.

Google's demonstration was a step toward a longstanding goal: robots that can interact with humans in complex environments. In recent years, researchers have discovered that feeding large amounts of text into large machine learning models can yield programs with impressive language skills, including OpenAI's GPT-3 text generator. By digesting online writing, such software can produce readable articles on a subject, summarize or answer questions about a text, or hold coherent conversations.

Google and other Big Tech firms make extensive use of large language models for search and advertising. Several companies also offer the technology through cloud APIs, and new services have been developed for tasks like generating code and writing advertising copy.

Despite all the progress, AI programs can still become confused or produce gibberish. Models trained on web text lack a sense of truth and often reproduce the biases and hateful language found in their training data. This suggests that careful engineering may be necessary to guide a robot without letting it run amok.

Hausman's robot is powered by PaLM, Google's most powerful language model. PaLM can perform many tricks, including explaining in natural language how it reaches a particular conclusion. The same approach is used to generate the sequence of steps that the robot will perform.

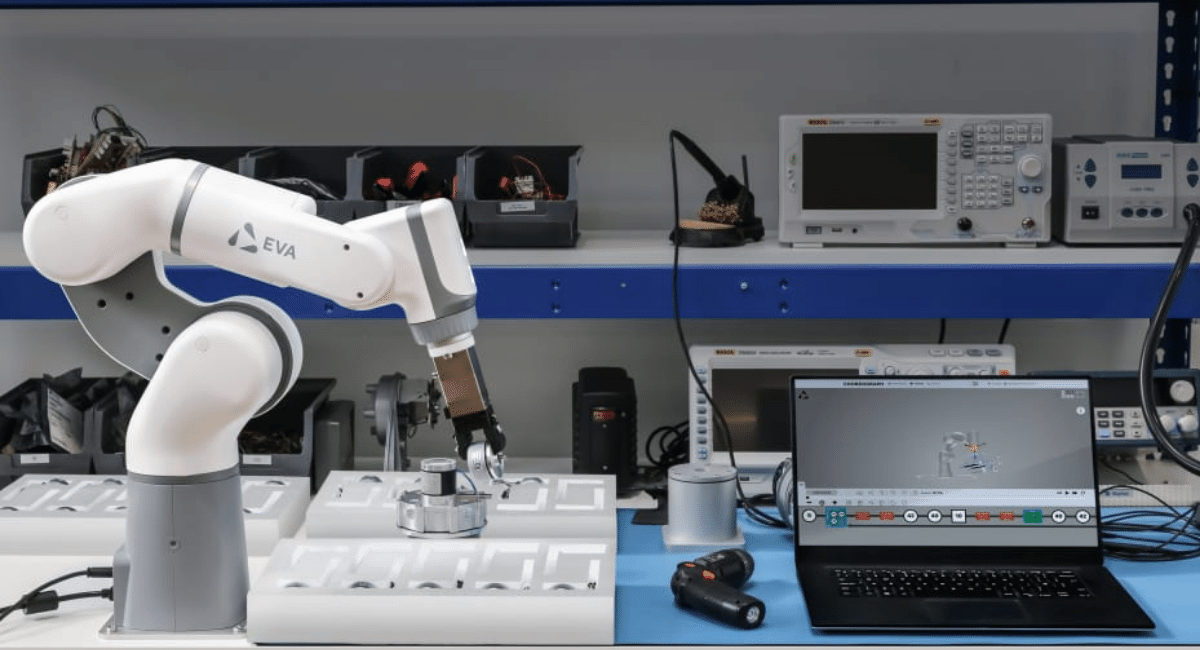

To build the robot butler, Google researchers worked with hardware from Everyday Robots, a company spun out of X, the division of Google parent Alphabet devoted to "moonshot" research projects. They created a new program that uses PaLM's text-processing capabilities to translate a spoken phrase or command into a sequence of appropriate actions, such as "pick up the chips" or "open drawer," that the robot can perform.

The robot's library of physical actions was learned through a separate training process, in which humans remotely controlled the robot to demonstrate tasks such as picking up objects. The robot can perform only a limited set of tasks within its environment, which helps prevent misunderstandings by the language model from turning into errant behavior.
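
The idea of restricting a language model's suggestions to a fixed library of actions can be illustrated with a toy sketch. This is not Google's code: the skill list, the `HINTS` table, and the functions `propose_steps` and `plan` are all hypothetical, and a simple keyword lookup stands in for the language model. The point is only that a proposed step outside the library is never executed.

```python
from typing import List

# Hypothetical skill library: the only actions this toy robot can execute.
SKILLS = (
    "find a drink", "pick up the drink",
    "find a sponge", "pick up the sponge",
    "pick up the chips", "open drawer",
    "bring it to the user",
)

# Toy stand-in for a language model: a cue word in the request
# is mapped to the object the user probably wants.
HINTS = {"thirsty": "drink", "spilled": "sponge"}


def propose_steps(command: str) -> List[str]:
    """Pretend language model; it may propose steps the robot cannot do."""
    words = command.lower().replace(",", " ").replace(".", " ").split()
    for cue, obj in HINTS.items():
        if cue in words:
            return [
                f"find a {obj}",
                f"pick up the {obj}",
                "bring it to the user",
                f"juggle the {obj}",  # deliberately outside the library
            ]
    return []


def plan(command: str) -> List[str]:
    """Keep only the proposed steps that exist in the skill library."""
    return [step for step in propose_steps(command) if step in SKILLS]


print(plan("I'm thirsty"))
print(plan("Whoops, I just spilled my drink"))
print(plan("Do a backflip"))
```

In this sketch, an unrecognized request yields an empty plan rather than an improvised action, which mirrors the safety benefit of a limited task set described above.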

PaLM's language skills help the robot make sense of relatively abstract commands. Stefanie Tellex, an assistant professor at Brown University who works on robot learning and human-robot collaboration, said that applying large language models to robotics is an exciting direction. She added that the range of tasks robots can perform still needs to grow, which remains a largely unsolved problem.

Another researcher, Brian Ichter, a scientist at Google who has worked on robotics projects, noted that the Google kitchen robot can still struggle, because grasping objects correctly remains very challenging for a machine.

Still, it remains unclear how the system would handle complex commands or sentences. Can it understand only short sentences, or must commands be limited to a small set of simple instructions? Meanwhile, advances in AI have expanded the abilities of robots. Industrial robots, for example, can quickly identify products or spot defects in them. Many researchers are exploring ways for robots to learn through practice, simulation, or observation, and most of the demos so far have produced impressive results. Despite these benefits, European legislators are moving toward passing an Artificial Intelligence Act for the region to protect the public against the technology's adverse effects.

Google's research project is still a long way from being a product, but many rival companies are showing new interest in home robots. Late last September, Amazon demonstrated Astro, a home robot with much more limited abilities. This month, the company announced plans to buy iRobot, the maker of the popular Roomba robot vacuum cleaner. Elon Musk, meanwhile, has said that Tesla will soon build a humanoid robot.
