(Image credit- ABC News) With the development o...


By also running the identical ad set on YouTube, Global Witness found that the problem is particularly pronounced on Facebook and is not necessarily a shortcoming of all ad-based social networks. Unlike Facebook, YouTube did not approve any of the ads. It also went one step further and suspended the accounts that tried to publish them.

According to Sharpe, "YouTube's far greater response shows that the test we set is doable to pass."

Neither Meta nor YouTube could be reached for comment. Naomi Hirst, the leader of Global Witness' digital threats campaign, said it was difficult to have any faith that Facebook would take the steps required to stop the spread of misinformation and hatred on its platform. Despite the company's assurances that it takes the matter seriously, she said, Global Witness' investigations have shown several failures.

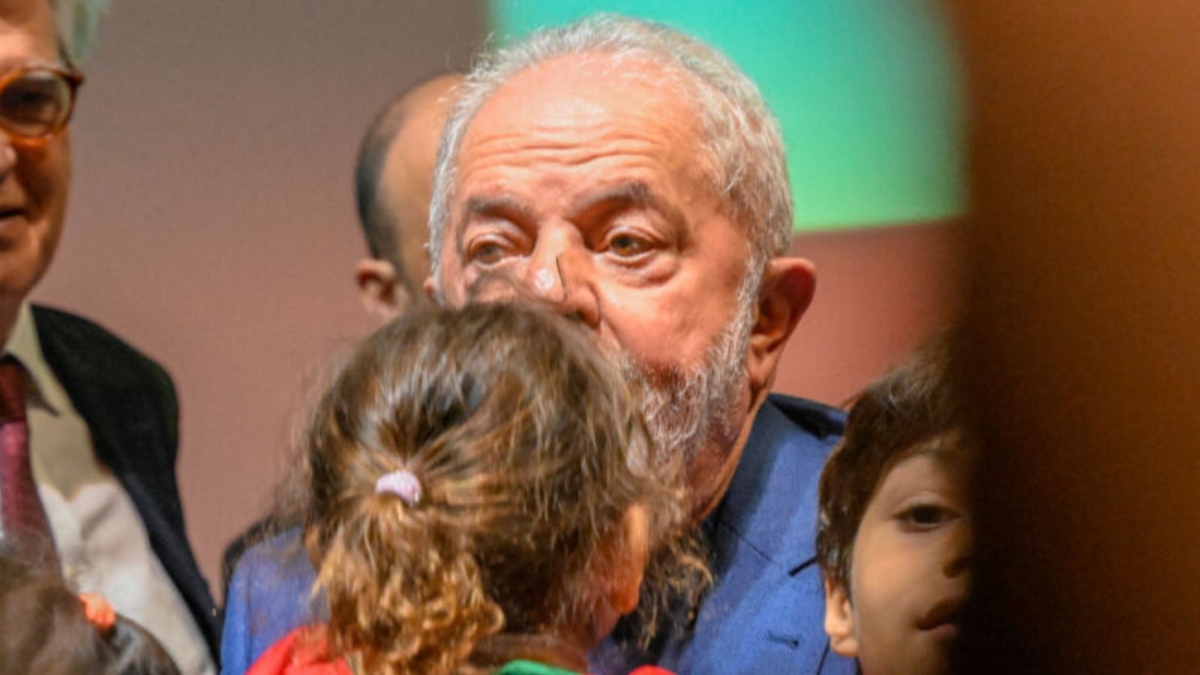

Photo Credit: Reuters

"As we've previously stated, before the Brazilian election last year, we removed hundreds of thousands of pieces of content that broke our policies against incitement to violence and rejected tens of thousands of ad submissions before they ran," a Meta spokesperson said in a statement quoted in Global Witness' press release. "We constantly improve our processes to implement our policies at scale. To help keep our platforms secure from misuse, we rely on teams and technology."

Global Witness has advised Facebook and all other social media companies to commit to stepping up content-moderation efforts with the same vigor they showed after the US attacks. The recommendation follows a recent test that seemed to show Facebook was exerting less effort to stop violence-inciting content from fueling civil unrest in Brazil.

According to Sharpe, "Global Witness is urging Facebook and other social media companies to immediately put in place 'break the glass' measures and publicly disclose what else they are doing to stop the spread of false information and incitement to violence in Brazil, as well as how they are funding those efforts."
