In recent decades, scores of have used the theme ...

news-extra-space

Source: Wallpapers in 4k[/caption]

We chatted with one of the paper's co-authors, Adam Gleave, a Ph.D. candidate at UC Berkeley, to further understand this accomplishment and its consequences. Gleave created what AI researchers refer to as a "adversarial policy" along with co-authors Tony Wang, Nora Belrose, Tom Tseng, Joseph Miller, Michael D. Dennis, Yawen Duan, Viktor Pogrebniak, Sergey Levine, and Stuart Russell. The researchers' strategy in this example combines a neural network with a tree-search technique (known as Monte-Carlo Tree Search) to find Go moves.

World-class AI for KataGo picked up Go by competing against itself in countless games. The fact that there isn't enough experience to cover every case creates vulnerabilities due to unforeseen behavior. Despite the fact that KataGo adapts well to numerous unique strategies, Gleave notes that the further disconnected it is from the training games, the weaker it becomes. There are probably many other such "off-distribution" strategies, but our adversary has identified one that KataGo is particularly susceptible to.

Source: Wallpapers in 4k[/caption]

We chatted with one of the paper's co-authors, Adam Gleave, a Ph.D. candidate at UC Berkeley, to further understand this accomplishment and its consequences. Gleave created what AI researchers refer to as a "adversarial policy" along with co-authors Tony Wang, Nora Belrose, Tom Tseng, Joseph Miller, Michael D. Dennis, Yawen Duan, Viktor Pogrebniak, Sergey Levine, and Stuart Russell. The researchers' strategy in this example combines a neural network with a tree-search technique (known as Monte-Carlo Tree Search) to find Go moves.

World-class AI for KataGo picked up Go by competing against itself in countless games. The fact that there isn't enough experience to cover every case creates vulnerabilities due to unforeseen behavior. Despite the fact that KataGo adapts well to numerous unique strategies, Gleave notes that the further disconnected it is from the training games, the weaker it becomes. There are probably many other such "off-distribution" strategies, but our adversary has identified one that KataGo is particularly susceptible to.

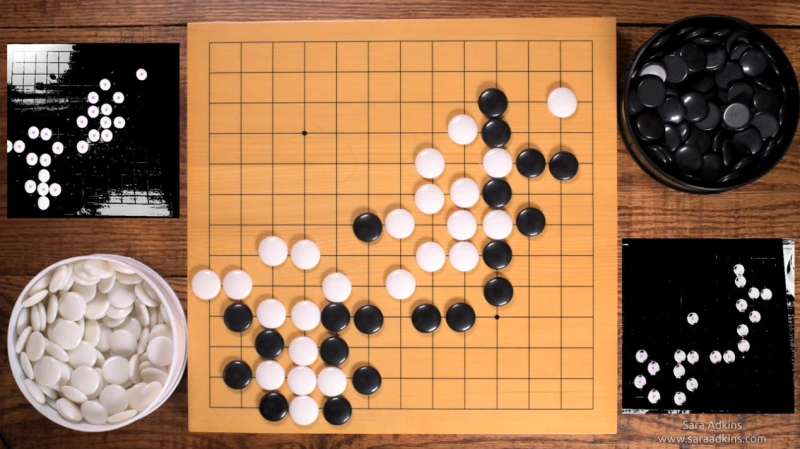

According to Gleave, the adversarial policy operates during a Go match by initially asserting claim to a tiny portion of the board. He included a link to an illustration where the opponent, who controls the black stones, predominantly plays in the top-right corner of the board. While playing a few stones that are simple to take in that area, the opponent lets KataGo (playing white) claim the remaining portions of the board.

Despite these cunning deceptions, the adversarial policy is not all that effective in Go. In fact, even inexperienced humans may readily defeat it. Instead, the adversary's only goal is to exploit a previously unknown KataGo vulnerability. This work has significantly wider ramifications because practically any deep-learning AI system could experience a situation like this.

According to Gleave, the adversarial policy operates during a Go match by initially asserting claim to a tiny portion of the board. He included a link to an illustration where the opponent, who controls the black stones, predominantly plays in the top-right corner of the board. While playing a few stones that are simple to take in that area, the opponent lets KataGo (playing white) claim the remaining portions of the board.

Despite these cunning deceptions, the adversarial policy is not all that effective in Go. In fact, even inexperienced humans may readily defeat it. Instead, the adversary's only goal is to exploit a previously unknown KataGo vulnerability. This work has significantly wider ramifications because practically any deep-learning AI system could experience a situation like this.

According to research, AI systems that appear to operate at a human level frequently do so in a very alien manner, which can lead to failures that are unexpected to humans, says Gleave. While similar failures in safety-critical systems could be dangerous, this Go result is amusing.

Imagine a self-driving car AI that runs into a highly improbable situation that it didn't anticipate, allowing a human to manipulate it into engaging in risky activities, for example. Better automated testing of AI systems is required, according to Gleave, "not simply to assess average-case performance, but to uncover worst-case failure modes."

The classic game of Go continues to have an important influence on machine learning more than five years after artificial intelligence (AI) eventually beat the greatest human players. Once widely used, knowledge of the go-playing AI's flaws might even result in life-saving measures.

According to research, AI systems that appear to operate at a human level frequently do so in a very alien manner, which can lead to failures that are unexpected to humans, says Gleave. While similar failures in safety-critical systems could be dangerous, this Go result is amusing.

Imagine a self-driving car AI that runs into a highly improbable situation that it didn't anticipate, allowing a human to manipulate it into engaging in risky activities, for example. Better automated testing of AI systems is required, according to Gleave, "not simply to assess average-case performance, but to uncover worst-case failure modes."

The classic game of Go continues to have an important influence on machine learning more than five years after artificial intelligence (AI) eventually beat the greatest human players. Once widely used, knowledge of the go-playing AI's flaws might even result in life-saving measures.

Leave a Reply