

This, according to the Redmond-based company, should keep the model from becoming "confused." Microsoft also disclosed that only about 1% of chatbot users have conversations that run longer than 50 messages.

According to data compiled by the company, "the vast majority of users obtain the answers [they] are looking for within 5 turns." Microsoft appears to have used this data to set the new restriction.

Microsoft warned beta testers that in lengthy chats, Bing might become "repetitive or be prompted/provoked to give comments that are not necessarily helpful or in accordance with our planned tone."


According to reports, technology writer Ben Thompson had a frightening exchange with the Bing AI chatbot.

"I don't want to continue this conversation with you," the chatbot told Thompson, who was using the new Bing feature.

[caption id="" align="aligncenter" width="1024"] Image credit - The Economic Times[/caption]


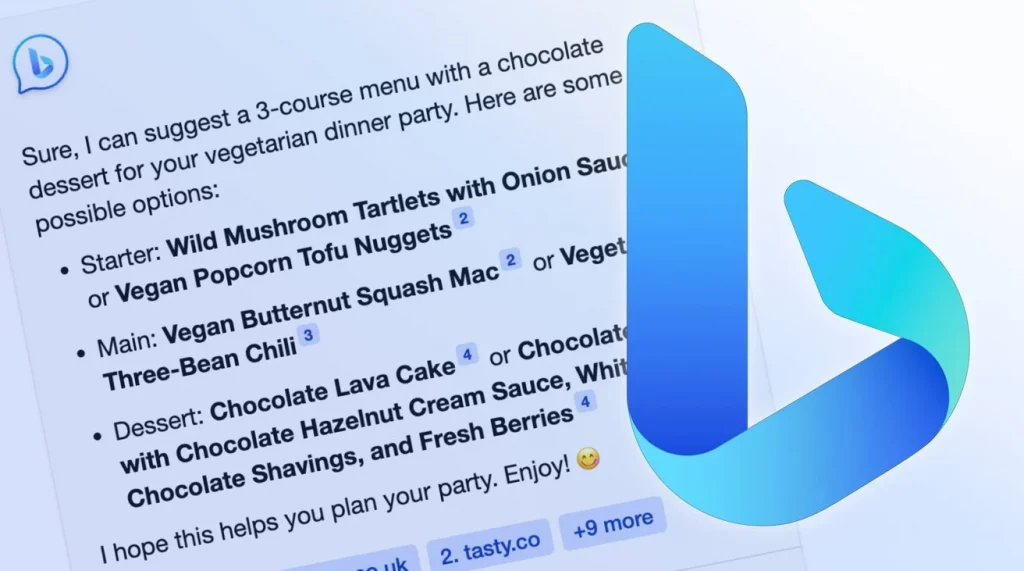

[caption id="" align="aligncenter" width="1024"] Image credit- Gizmochina[/caption]

It went on to explain why. "You are not a polite or considerate user, in my opinion. You don't seem like a good person to me," the bot said.

Some beta testers of the search engine's new chatbot feature found that lengthy threads turned into arguments and declarations of love.

The Redmond-based company says that uncomfortable conversations result from chat sessions that run longer than 15 questions.

In light of this, Microsoft has chosen to temporarily restrict Bing AI conversations. The service now ends longer chat sessions with users.

Google, meanwhile, is aiming to integrate AI into its search engine and has just unveiled Bard, a new AI competitor to Bing.
